Risk-Based Autonomy Matrix: Map Ticket Risk to AI Actions

Most MSPs overcorrect on automation. Either they never turn it on past triage because of risk, or they over-automate and get burned by edge cases. The fix is boring and effective, a risk-based autonomy matrix that tells your AI exactly what it can do, when to ask, and when to escalate. Get that right and you reduce L1 grunt work without gambling on safety.

I learned this the hard way. You probably did too. Turn everything on and a weird license request at 11 pm goes sideways. Turn everything off and you are back to manual work by Monday. The middle path is where the margin lives, measured, gradual, and grounded by your ticket history.

Key Takeaways:

- Use a risk-based autonomy matrix to make autonomy granular, not binary

- Classify tickets by measurable risk signals from your PSA and IdP

- Learn approval routing from history instead of hand-writing policies

- Run a 14-day hypercare with rollback triggers before expanding scope

- Track five metrics that prove it is safe to widen autonomy

- Expect 40 to 70 percent less manual L1 work on low-risk requests in 60 days

Why Binary Automation Fails MSPs

Binary automation fails because tickets are not binary. Safe and risky requests look similar at intake, but diverge fast once you check identity, scope, and policy. A one-size switch guarantees mistakes or stalls. A granular model avoids both by mapping action to risk. Password resets are green, mailbox delegation might be yellow, license upgrades are red.

Edge Cases Are Normal, Not Exceptions

Edge cases show up daily, not yearly. New hires with duplicate emails, contractors with odd access, clients with quirky distribution groups. A user gets a new phone and forgets to transfer their authenticator. Or worse — they run a "cleaner" app that deletes it because they only used it "one time last month." Or — and this actually happens — they delete the authenticator because "the code kept changing." Every one of those becomes a ticket that looks simple but isn't, because SSPR doesn't cover MFA re-enrollment. When your model assumes perfect inputs, it fails. When your model expects noise, it catches it. That is why approval prompts, identity verification, and client-specific context need to sit in the path by default, not as afterthoughts.

Most MSPs treat the first miss as proof the bot is broken. I get it. A false positive hurts. What actually went wrong is the design. Risk signals were missing, or the action lacked a soft stop for human confirmation. Build soft stops where history says you need them, and you stop fearing edge cases. You plan for them.

A simple example helps:

- Green tickets, password resets, account unlocks with verified identity

- Yellow tickets, mailbox permissions and group changes with manager approval

- Red tickets, license changes or anything touching finance, always ask first

Day-One Value Dies In Workflow Builders

Time to value matters. Long builds kill momentum. If you have to author 50 workflows before you see a resolved ticket, you will stall. Your team will quit on it. Every MSP owner who has tried a workflow-heavy platform knows the pattern: optimistic kickoff, weeks of category mapping, stalled adoption, and then the verdict — "trainwreck." The next time someone mentions automation, the whole room flinches.

A better approach reads your ticket history, mirrors how you already work, and starts with low-risk autonomy while approvals and guardrails cover the rest.

I prefer systems that learn from reality. Historical tickets tell you who approves what for each client, what language signals approval, and where requests usually go wrong. That beats guessing. It also builds trust. Your techs see it acting like a junior coworker, not a brittle script farm.

Reframing Autonomy Around Risk, Not Work Type

Autonomy decisions should be risk-first, not work-type-first. Two password resets can have different risk based on identity verification and policy. Two license changes can be worlds apart based on cost impact and contract limits. A risk lens makes autonomy predictable. A task lens makes it random.

Risk Dimensions You Can Actually Measure

You do not need a PhD model. Just use signals you already have. Identity confidence from IdP, cost impact from license catalogs, authorization from org charts, data sensitivity from group membership, and urgency from impact rules. These are concrete and available in your stack today.

When you line up those signals, your matrix writes itself. Green for low sensitivity, verified identity, no cost. Yellow for medium sensitivity or mild cost with approval. Red for high sensitivity or high cost where a human must weigh tradeoffs. You can even point to docs that formalize some of these rules, like Microsoft’s guidance on self-service password reset, which explains the verification flow in detail for safe reset paths, see the Microsoft Entra self-service password reset overview at Microsoft Learn.

Ticket Features Hiding In Your PSA

Your PSA is a gold mine. Categories, subcategories, request keywords, requester role, client, department, disposition notes, and who approved last time are all sitting there. Most teams ignore half of it. Pull those features, label outcomes from closed tickets, and you have a practical classifier without heroics.

You will find consistent patterns. HR creates onboarding, finance requests mailbox access for auditors, field techs ask for VPN exceptions. Approvals usually tie to department heads or client admins. Use that intel. It is better than a hundred if-else rules you will never keep up to date.

The Cost Of Skipping A Risk-Based Autonomy Matrix

Skipping the matrix is expensive. Not abstract expensive. Dollars and hours. Most MSPs carry 200 to 400 L1 tickets a month at about 10 to 15 minutes each. That is 50 to 100 hours of labor, which works out to seven to fifteen thousand dollars per month at common loaded rates. Nothing fancy. Just math.

Where The Minutes Disappear

Minutes leak everywhere. Intake ping-pong, identity lookups, handoffs, approval chases, portal logins, documentation, and user updates. None of those steps are hard. They are just constant. Your best people resent it. Your clients do not value it. Your margins eat it.

Common time sinks include:

- Missing details at intake, two or three messages to clarify

- Manual identity checks, toggling across IdP and PSA

- Approval chasing, email threads no one reads

- Swivel-chair execution, five consoles for one change

- Ticket notes and status updates, twice if it reopens

What Leaders Actually Lose

Leaders lose more than hours. They lose after-hours coverage because no one wants the pager. They lose senior focus because L2 and L3 step in to clean up escalations. They lose credibility when the same basic request takes 20 minutes today and 2 minutes tomorrow. A consistent, risk-aware model forces consistency back into the system, especially when evaluating risk-based autonomy matrix.

I am not saying every vendor doc solves this, but even simple offboarding steps in official admin guides show how much is repeatable when you standardize, for example, Google’s instructions to suspend or restore a user in Admin console, see Google Admin Help. Your matrix does the same for autonomy. It removes variance.

What It Feels Like When Autonomy Goes Wrong for Risk-based autonomy matrix

Everyone remembers the first bad call. A license upgrade that hit the monthly bill without approval. A mailbox permission that exposed sensitive mail. Trust drops to zero. The team shuts autonomy off. You are back to manual within a day. Painful. Avoidable.

False Positives At 11 PM

Night shifts punish mistakes. A bot takes action on a ticket that looked like a reset but was actually a name change. The user gets confused, reopens. The client’s admin wakes up angry. Your on-call tech spends an hour unwinding what should have been a two-minute fix. A single approval gate would have prevented it.

This is exactly what makes MSP owners say "I’m not giving an AI write access to passwords." And they have a point. The answer isn’t "trust the bot." The answer is scoped permissions, identity verification before action, and approval gates for anything above green. A well-scoped AI with audit trails is often more secure than an overtired tech at 11pm who resets a password without verifying the caller because the queue is backing up.

I like to plot those moments. You will see the same pattern, thin signals, no approval prompt, and no scoped permission on the service account. Fix those three and your risk drops fast. Not to zero, but to livable.

Approvals Stuck In Someone’s Inbox

Approvals fail in email. That is the truth. People miss them. They forget context. They reply late. When the prompt lives in chat and includes the right context, approval cycle time collapses. Even better when the approver is inferred from history rather than guessed. Microsoft’s Approvals app shows the pattern for structured approvals in messaging tools, which is a decent mental model, see the Approvals guidance at Microsoft Learn.

You will still need human decisions for red tickets. The goal is to make that decision fast and auditable, with identity, impact, and requested change visible in one place. That is what reduces bounce backs.

Build A Risk-Based Autonomy Matrix That Actually Works

A useful matrix is simple. Four autonomy levels, clear guardrails, and signals pulled from systems you already own. Start with low-risk work, measure outcomes, then widen scope. If a rule causes rework, roll it back. You are not carving stone. You are tuning.

Define Four Autonomy Levels

Start by defining levels that everyone can remember. Low-friction semantics win. Put the labels in your runbook and in your PSA categories so people see them daily. You want techs and managers speaking the same language when they discuss scope.

Then write the model in plain English:

- Level 0, Always ask. High sensitivity or high cost. Human must approve.

- Level 1, Ask if uncertain. Medium sensitivity or mild cost. Learn approver from history.

- Level 2, Auto with guardrails. Low sensitivity, no cost, identity verified, log and notify.

- Level 3, Auto and close. Trivial changes like unlocks or SSPR-eligible resets, full audit.

Extract Features From History, Not Hunches

Pull six to ten features from your PSA and IdP that correlate with risk. Train your classifier on last quarter’s tickets. Tag outcomes as safe autonomous, safe with approval, or escalate. Hard code a few red lines where policy demands it. Everything else is data-driven, especially when evaluating risk-based autonomy matrix.

Useful, lightweight features to start with:

- Requester role, department, client

- Request type keywords and category

- Identity verification strength and device health

- Group sensitivity and data scope

- License cost impact and contract constraints

- Past approver for this request and client

Run a 14-day hypercare. Review every autonomous close daily. Document misses, tune rules, and set rollback triggers. If reopen rate spikes or an approver flags confusion, narrow the scope. When metrics stabilize, widen again. It is easier than it sounds once the feedback loop is tight.

Ready to see this working in the real world, not on a whiteboard, Learn more about Rallied AI.

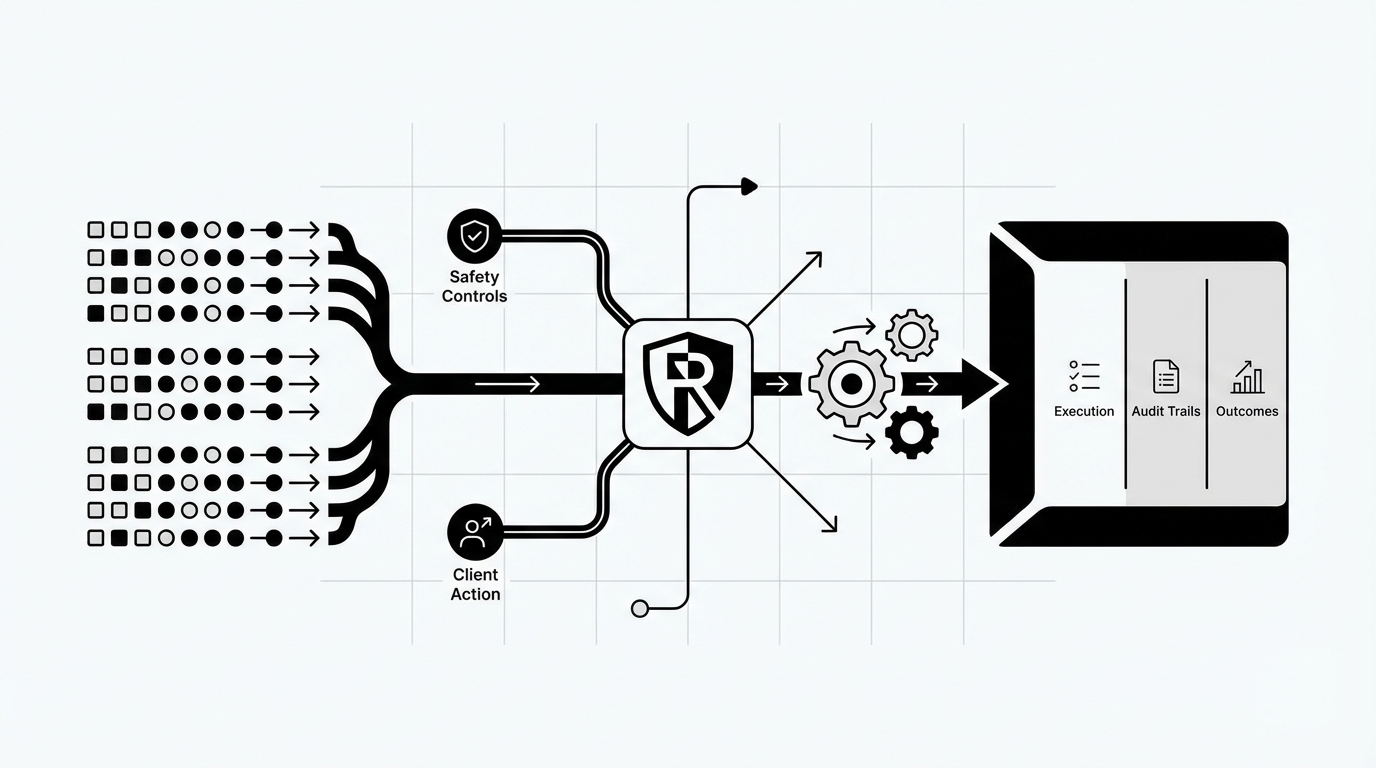

How Rallied Operationalizes Your Risk-Based Autonomy Matrix

Rallied turns the matrix into outcomes. It learns from your ticket history in a few days, applies safety controls you define per client and action, and executes across your stack with audit trails and chat-based approvals. Routine L1 tickets close in about 60 to 120 seconds, while higher-risk work routes for explicit approval.

Same-Week Learning And Guardrails

Rallied ingests historical PSA tickets to infer request types, approvers, and approval signals, then maps those to your IdP org data. That gives you a zero-config starting point that mirrors how your team already works. You decide what runs autonomously and what requires approval, per client and per action.

Safety is built in. Least-privilege service accounts, per-action approval gates, and full audit logs make autonomy governable. A 14-day hypercare plan lets you review outcomes weekly and expand scope safely. You will see early wins without betting the farm on day one.

Key capabilities that enable this:

- Zero-Config Learning from Ticket History, uses your data to mirror real approval patterns

- Safety Controls, Guardrails and Hypercare, enforce least privilege, approvals, and weekly reviews

- Rapid Deployment and Time-to-Value, connect your stack and start resolving L1 work the same week

Curious how the setup flows from kickoff to first closes, See how Rallied AI works.

Autonomous L1 Execution Across Your Stack

Once guardrails are set, Rallied acts. It reads the ticket, verifies identity against M365, Okta, JumpCloud, or Google Workspace, decides the right action, and executes across IdP, PSA, RMM, and SaaS. For consoles without APIs, it uses a secure browser agent with scoped accounts. Approvals happen inline in Slack or Teams, with clear context and audit.

This is not a summarizer. It is an AI technician. Password resets, unlocks, MFA re-enrollments, mailbox permissions, and simple license changes move from minutes to seconds. Updates post back to your PSA automatically. Users get clear instructions in the same chat where they asked for help.

Specific features at work:

- Autonomous L1 Ticket Resolution, end-to-end fixes with user comms and PSA updates

- Full-Stack Integrations, broad connections across PSA, RMM, IdP, docs, and chat

- Approval Routing, learned plus configured, so medium-risk work gets the right sign-off

- Browser Agent for No-API Actions, safe coverage for admin consoles that block programmatic access

- Conversational Interface in Slack or Teams, intake, approvals, and updates where people already are

Ambiguous problems get faster, too. Rallied runs cross-stack checks in parallel, correlates likely root causes, and either deploys a safe remediation via RMM or hands a fully enriched ticket to the right queue. During outages, it links duplicate tickets under a parent incident and posts status updates, which cuts duplicate triage.

Before you wrap this into next quarter’s plan, make it tangible, Get started with Rallied AI.

Conclusion

Binary on or off is the wrong frame. Risk-aware autonomy is the only frame that scales. Define four levels, learn approvals from history, verify identity and cost impact up front, and widen scope only when the metrics tell you it is safe. Do that and you cut 40 to 70 percent of manual L1 execution on low-risk classes inside 60 days without losing sleep over red tickets.

You do not need months of workflow building for this. You need the right signals and a system that learns how your shop already works, then acts with guardrails. That is the play. That is how you protect margins, keep SLAs healthy, and give your best people their time back.